How We Evaluate LLM Accuracy for Contract Review

LLMs are powerful but inconsistent, and in contract review, small errors can be costly. Here's how Docusign's AI team built a fast, no-code evaluation system to continuously test, measure, and improve the accuracy of our contract review assistant.

Engineers building generative AI products are confronted with a tricky challenge: we’re trying to develop systems that don’t make mistakes, but we’re building on top of LLMs that make a lot of them.

Accuracy and reliability are especially crucial when AI is used to support high-stakes situations like contract negotiations. Even small errors or a missing term can be costly, which is why human review and oversight remain essential.

LLMs like ChatGPT, Claude, and Gemini possess seemingly magical capabilities. But for every example of AI producing a startlingly insightful answer or winning a gold medal at the International Mathematical Olympiad, there is a competing example of it lacking basic understanding or hallucinating information out of nowhere. What’s more, LLMs are confidently inaccurate, opaque, and difficult to improve. This makes it uniquely challenging to achieve AI’s promise of saving time and reducing human error.

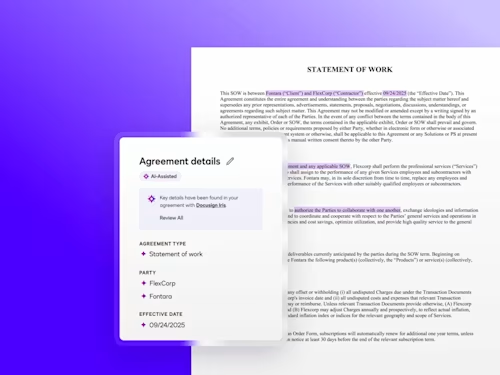

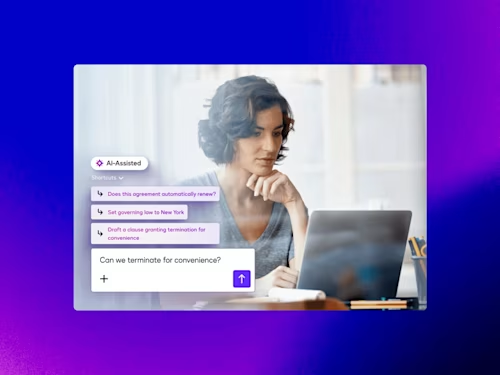

The AI development team at Docusign grappled with this problem firsthand while building Docusign’s contract review assistant, powered by our AI engine called Docusign Iris. The assistant helps speed up contract negotiation by flagging risky or non-standard language and suggesting edits. Our AI assistant is designed to help our customers move quickly while also trusting that no detail is overlooked that could violate their company policies.

In this post, we'll share the insights we learned as we developed the contract review assistant into an AI product that delivers useful suggestions on a consistent basis.

Fast iteration

The inconsistency of LLMs is compounded by the fact that the field of generative AI moves very quickly, and the models and methods that are state-of-the-art change frequently. When a new, more powerful model is released, we want to integrate it into our pipeline in days rather than weeks. For instance, we might investigate upgrading to the new model to take advantage of its increased reasoning ability, speed, and output capacity. Our infrastructure allows us to quantify a model’s performance against our historical benchmarks in a matter of hours, ensuring that we have a reliable view of the model’s likely performance on real customer data.

Unfortunately, sometimes a new model introduces new errors for a certain category of data or segment of customers. A model that is bigger and faster might still perform worse on specific legal formatting or niche clause types. And due to the black-box nature of today’s large language models, it can be difficult to know immediately if a new change introduces these regressions. This means it’s critical to implement regular monitoring to inform us not just that the model is returning results, but that the results match what we expect for a variety of common inputs.
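A minimal sketch of what such a regression check could look like, assuming per-segment scores have already been computed for the production baseline and for a candidate model. The function name, segment names, scores, and tolerance are all hypothetical and chosen only for illustration.

```python
def find_regressions(baseline, candidate, tolerance=0.01):
    """Return segments where the candidate scores worse than the baseline.

    Both arguments map a segment name to a score in [0, 1]. The tolerance
    absorbs small run-to-run noise; 0.01 is an arbitrary illustrative value.
    """
    regressions = {}
    for segment, old_score in baseline.items():
        new_score = candidate.get(segment)
        if new_score is None:
            continue  # segment was not measured for the candidate
        drop = old_score - new_score
        if drop > tolerance:
            regressions[segment] = round(drop, 4)
    return regressions


# Hypothetical recall per segment (illustrative numbers only).
baseline_recall = {"NDA": 0.91, "MSA": 0.88, "scanned PDF": 0.80}
candidate_recall = {"NDA": 0.94, "MSA": 0.89, "scanned PDF": 0.72}

print(find_regressions(baseline_recall, candidate_recall))
# {'scanned PDF': 0.08}
```

Here the candidate improves on two segments but drops on one, which is the kind of regression an aggregate score can hide.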

No-code testing

The most important factor in the success of the contract review assistant was building a system that allowed us to iterate quickly and try new things with minimal friction. We accomplished this by allowing engineers to easily plug in different models, prompts, hyperparameters, and features to keep pace with the latest research, AI best practices, and our customers’ needs.

Our decision to promote a model into production is driven entirely by its performance in these controlled tests. We have a user interface where engineers can experiment with prompts by simply editing a text box, and then immediately see the impact of their change on our metrics. They can determine at a glance which model or version performs better and, if necessary, dig into the actual model outputs to debug any errors. Engineers and product managers don’t need to write code or go through the deployment process to test these changes, so we’re able to move much faster to try out a new model or prompt that might improve our performance.

Below is a view of the internal dashboard our team uses to vet every change before it reaches a customer (note: the data shown is for illustrative purposes only). Each experiment is tracked visually in the chart at the top, displaying the history of our iterations and the steady improvement in the reliability of our product.

In the table, engineers can compare model versions side-by-side across thousands of test cases, and click on any row to inspect individual examples and find patterns in what we get right or wrong. Because these detailed performance metrics are available on demand from any engineer’s local development environment, we can iterate in hours rather than weeks while ensuring every update maintains our high standards.

Dashboard used by Docusign to vet every change before it reaches a customer

Reliable evaluation

An AI product is only as good as your ability to evaluate it. Without a thorough and realistic evaluation system, you won’t know there is a problem until customers complain, which is way too late. The challenge we faced was determining what we should measure in order to evaluate the performance of a new model.

Constructing an evaluation dataset. In an industry with sensitive data, like legal contracts, building models requires a thoughtful approach to data. At Docusign, customer trust and security come first. Any data used to improve our AI models is anonymized and aggregated, and only used with customer consent. We also provide an AI Data Controls setting within Docusign so customers have additional control over sharing data for AI training.

We also limit employee access to customer contracts and have a thorough process for customers to consent to engineers reviewing certain documents in order to improve the quality of their results.

We work with practicing lawyers to learn what answers they would expect, and then evaluate how well our models meet those expectations.

Evaluating Docusign Contract Review Assistant:

10,000+ experiments run

100+ datasets created

2M+ evaluation documents

1,500+ realistic example inputs tested regularly

These numbers reflect data and experiments only used for Docusign AI-Assisted Review.

Deciding what to measure

There’s no single metric that can summarize the performance of our models. The simplest approach would be to measure each model’s accuracy: how often does the system end up with the most useful answer? In the example of the contract review assistant, how often does the tool flag risky language and suggest a reasonable edit to make the language compliant? However, when we spoke to customers about their priorities, we quickly realized that only measuring accuracy would not be enough.

Collaborating with customers

In those conversations, customers told us that in high-stakes situations like contract negotiation, the cost of a false negative (failing to flag risky language) can be far higher than the cost of a false positive (flagging something that isn’t truly risky). This led us to understand that more nuanced metrics such as precision (how often the model is right when it flags a risk) and recall (how many of the total existing risks the model actually caught) are better at differentiating between these types of errors than simply using accuracy.
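A small worked example makes the gap between these metrics concrete. The counts below are invented for illustration: 1,000 reviewed clauses, of which 50 are truly risky, and a model that flags very few of them.

```python
# Hypothetical counts for 1,000 reviewed clauses, 50 of which are truly risky.
true_positives = 18   # risky clauses the model flagged
false_positives = 2   # safe clauses the model flagged
false_negatives = 32  # risky clauses the model missed
true_negatives = 948  # safe clauses the model left alone

total = true_positives + false_positives + false_negatives + true_negatives
accuracy = (true_positives + true_negatives) / total
precision = true_positives / (true_positives + false_positives)
recall = true_positives / (true_positives + false_negatives)

print(f"accuracy={accuracy:.3f} precision={precision:.3f} recall={recall:.3f}")
# accuracy=0.966 precision=0.900 recall=0.360
```

Accuracy looks excellent because most clauses are safe, yet the model misses nearly two thirds of the real risks. Only recall exposes that.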

Furthermore, legal language is often ambiguous, and even experienced lawyers may disagree on how to interpret a term or structure a clause. In such cases, there isn’t a single “correct” answer, which means a simple metric like accuracy can’t capture the full range of valid interpretations. To build our evaluation dataset, we consulted multiple lawyers and reached consensus on each item. What truly matters to customers is not “accuracy” in the abstract, but how effectively the AI helps them achieve their goals: saving time, reducing errors, and ensuring compliance. Metrics should reflect these real-world business objectives.

Prioritizing the metrics

With the contract review assistant, we settled on three core metrics:

Finding: Locating relevant language in the contract, which helps save users time searching the document to see where their question is answered.

Flagging: Identifying and highlighting risky language that violates the customer’s policies.

Fixing: Suggesting high-quality edits to bring the contract language into compliance with the customer’s policies.

For each of these metrics, we don’t just rely on one number. We calculate precision, recall, and F1 score to gain insight into different aspects of the customer experience. Because missing a critical term or potential risk in a negotiation is far more costly than a false alarm, we pay close attention to recall for all of our metrics. At the same time, low precision can overwhelm customers with unnecessary clauses to review, so we carefully balance both.
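As a rough sketch of how these three scores can be computed for one test case, assume the reference answer and the model output are each represented as a set of clause identifiers. That representation, the function name, and the clause IDs are assumptions made for this example.

```python
def precision_recall_f1(expected, predicted):
    """Score a set of predicted items against the expected (reference) set."""
    true_positives = len(expected & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(expected) if expected else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical clause IDs: what reviewers expect versus what the model flagged.
expected_flags = {"clause-3", "clause-7", "clause-12", "clause-15"}
predicted_flags = {"clause-3", "clause-7", "clause-9"}

precision, recall, f1 = precision_recall_f1(expected_flags, predicted_flags)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
# precision=0.667 recall=0.500 f1=0.571
```

The same function could be applied separately to finding, flagging, and fixing, as long as each step's output can be compared to a reference set.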

Tracking these high-level scores gives us good insight into the product’s overall performance, but aggregate numbers aren’t actionable enough to pinpoint exactly what went wrong and how to fix it.

To truly understand a model’s strengths and weaknesses, we break these core metrics down across multiple segments:

Contract type: We track performance on specific types of contracts, like Master Service Agreements and Non-Disclosure Agreements.

Party perspective: We segment metrics based on whether we are reviewing a contract from the first-party or third-party perspective, or from the “buy-side” (procurement) or “sell-side” (sales) point of view. What is considered risky can change depending on the customer’s role.

Document characteristics: We slice our metrics by factors like file format, document length, the presence of tables and images, and other challenging features to ensure our parsing logic holds up in difficult circumstances.

Language: We track how well the model performs on English contracts as well as French, German, Spanish, Brazilian Portuguese, and other languages as we expand support.
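The segment breakdown above can be sketched as a simple group-by over test cases. The record layout (`contract_type`, `language`, `caught`, `total`) and every number here are hypothetical, and recall is used as the example metric.

```python
from collections import defaultdict


def recall_by_segment(cases, segment_key):
    """Group test cases by one attribute and compute recall per group.

    Each case is a dict holding segment attributes plus two counts:
    'caught' (risks the model flagged) and 'total' (risks reviewers expect).
    """
    caught = defaultdict(int)
    total = defaultdict(int)
    for case in cases:
        segment = case[segment_key]
        caught[segment] += case["caught"]
        total[segment] += case["total"]
    return {seg: caught[seg] / total[seg] for seg in total if total[seg] > 0}


# Illustrative test cases; overall recall is 28 / 40 = 0.70.
cases = [
    {"contract_type": "NDA", "language": "en", "caught": 9, "total": 10},
    {"contract_type": "NDA", "language": "fr", "caught": 3, "total": 5},
    {"contract_type": "MSA", "language": "en", "caught": 12, "total": 15},
    {"contract_type": "MSA", "language": "de", "caught": 4, "total": 10},
]

print(recall_by_segment(cases, "contract_type"))
# {'NDA': 0.8, 'MSA': 0.64}
print(recall_by_segment(cases, "language"))
# {'en': 0.84, 'fr': 0.6, 'de': 0.4}
```

Slicing the same cases by language shows a weakness on German documents that the contract-type view does not reveal.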

Beyond model accuracy, we evaluate the overall health of our product through a combination of operational metrics and expert human review. We closely monitor system health, including latency and uptime, to ensure that an upgrade in model quality doesn’t result in an unacceptable slowdown for our customers. We also actively collect customer feedback and work with practicing lawyers to learn what answers they would expect. These legal experts regularly test the product and flag any nuanced errors they see, ensuring we don’t miss critical issues that are obvious to lawyers but not to engineers.

Since we use a multi-step pipeline, errors at the beginning of the pipeline (like failing to “find” a relevant clause) can propagate and cause us to make mistakes in later steps. Therefore, we treat errors in the initial steps as a higher priority than those later in the pipeline. We also build in ways to recover from early errors if they are causing problems in later steps.
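One way to make that prioritization concrete is to attribute each failed test case to the earliest step that went wrong. The sketch below assumes the steps run in the order finding, flagging, fixing, and that each test case records a pass or fail per step. Both the ordering and the record format are assumptions for illustration, not a description of the production pipeline.

```python
# Assumed stage order for this example.
STAGES = ["finding", "flagging", "fixing"]


def first_failed_stage(result):
    """Return the earliest stage that failed, or None if every stage passed.

    `result` maps each stage name to True (correct) or False (incorrect).
    A missing stage is treated as a failure.
    """
    for stage in STAGES:
        if not result.get(stage, False):
            return stage
    return None


def count_root_causes(results):
    """Count failed test cases by the earliest stage that failed."""
    counts = {stage: 0 for stage in STAGES}
    for result in results:
        stage = first_failed_stage(result)
        if stage is not None:
            counts[stage] += 1
    return counts


# Hypothetical per-stage outcomes for five test cases.
results = [
    {"finding": True, "flagging": True, "fixing": True},
    {"finding": False, "flagging": False, "fixing": False},
    {"finding": True, "flagging": False, "fixing": False},
    {"finding": False, "flagging": False, "fixing": False},
    {"finding": True, "flagging": True, "fixing": False},
]

print(count_root_causes(results))
# {'finding': 2, 'flagging': 1, 'fixing': 1}
```

Counting naively, "fixing" failed in four cases, but only one of those failures originated in the fixing step itself.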

The following chart gives an example of how we track our progress as we test and tune the AI pipeline. Though the data here is just for illustration, the numbers show the kind of “climb” we look for when running experiments.

We start with a baseline (the system currently in production) and then try out different versions to see if we can improve the results across all of our metrics. In reality, progress is rarely this linear; we often have to navigate tradeoffs, such as choosing a model with better recall but slightly lower precision to ensure no risks are missed. By measuring our results at every step, we can ensure that every update is an overall improvement for our customers.

How we track progress as we test and tune the AI pipeline

Conclusion

Building genuinely helpful AI products, especially in sensitive industries that handle agreements, can be challenging. With the creation of the Docusign contract review assistant, we’ve learned the importance of embracing a development process that allows for fast experimentation backed by an evaluation system that reflects real user needs. This approach allows us to implement improvements and fix mistakes quickly, building trust with our users and saving them time in the contract review process.

Allison is a Lead Applied Scientist at Docusign on the team building Docusign AI-Assisted Review. She focuses on natural language understanding for legal workflows, including designing and curating datasets, creating evaluation metrics, and building end-to-end evaluation frameworks to ensure our models deliver reliable results and meaningfully reduce the time lawyers spend on routine document review.
