The Duck Talks Back: How I’m Using AI to Navigate AI Code Review

AI code review tools are leaving more comments than ever, but processing them mindlessly (rubber-stamping) can be just as risky as ignoring them. Docusign engineers share how they built a custom AI agent to turn PR feedback into a real conversation, not just a faster way to click "resolve."

Mason Chinkin, lead software engineer at Docusign, also contributed to this blog.

AI is the newest addition to a developer's toolbox, but unlike a new framework or programming language, nobody shipped a manual with it. As engineers determine AI’s value individually, knowledge sharing becomes mutually beneficial.

This post describes one technique I’ve been using to deploy AI to navigate AI code review. Like most techniques in these still-early days of AI deployment, it’s a starting point, not a prescription.

The review comment marathon

You finish writing your code. The pull request (PR) is open. The reviews start coming in.

Now the real work begins.

For each review comment, an engineer needs to:

Read it carefully

Find the exact line of code it refers to

Reconstruct enough mental context to understand what the reviewer is concerned about

Decide whether the concern is valid

Figure out a fix and make the change

Then repeat. Again and again.

Each comment requires a mini context switch. Engineers are not just making code changes; they are constantly rebuilding a mental model of a different part of the codebase, then tearing it down to rebuild the next one. The mental exhaustion of responding to feedback sets in before the engineer has written a single new line of meaningful code.

This is the hidden cost of code review. Not the fixes themselves, but the repeated assembly and disassembly of context around each one.

A new kind of reviewer

Last year at Docusign, our all-seeing internal AI code review tool began reviewing PRs across the company. It integrates into our existing CI/CD infrastructure and uses the codebase, diff, and relevant metadata to leave inline PR comments.

Our AI code review tool – born out of an internal hackathon – is designed to be thoughtful rather than noisy, leveraging RAG and LLM-as-judge validations to filter out duplicate comments, compiler-detectable issues, and nitpicks. The comments it leaves are substantive and include recommended solutions.

But human reviewers and AI reviewers scale in opposite directions. When a PR is small, human reviewers go deep. They read every line, leave detailed comments, and ask questions. When a PR is large, humans skim. The cognitive load of reviewing dozens of changed files is too high, and comment volume drops.

We looked at 40 recently merged PRs across one of our main repositories. Our code review tool accounted for roughly ⅔ of all review comments across every PR size bucket. On medium-complexity PRs (6-10 changed files), it averaged 8.3 comments while human reviewers averaged 2.3, a more than 3-to-1 gap. In one case, a PR with six changed files and 660 lines added received 36 comments from our AI code review tool, and one human comment.

On the most complex PRs, the ones where good feedback is most needed, an AI bot is often the dominant voice in the review thread. That changes how you need to think about those comments.

The problem with "just fix it"

A well-designed AI reviewer like ours leaves comments that sound authoritative. They are well-reasoned, technically grounded, and often correct. That is exactly what makes them dangerous to process mindlessly.

Comment left by our AI code review tool explaining why (demo) code was potentially wrong

The default workflow for many engineers is: read the comment, assume it is right, apply the change, resolve the thread, move on. It feels efficient. Comments are closed, progress is made, the queue is cleared.

But this process skips the "why." Is the comment actually pointing at the right problem? Is the suggested fix the best fix? Does the concern even apply to this specific code?

Automated review tools, no matter how good they are, can miss context, flag outdated patterns, or misread code that was already handled correctly nearby. Blindly applying every suggestion is not better engineering. It is just faster rubber-stamping.

The duck that talks back

To work through PR comments more deliberately, we built a custom VS Code Copilot agent called PR Review Navigator. It is a markdown file that instructs GitHub Copilot to behave as an interactive review assistant.

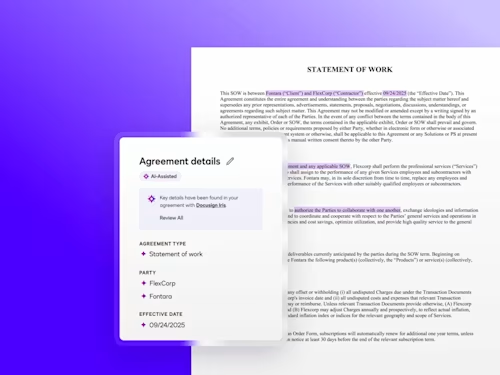

How does it work? When an engineer opens it and gives it a PR number, it fetches all unresolved review threads via the GitHub GraphQL API and presents them one at a time. For each comment it shows the file, the line, the full comment text, surrounding code context, and an AI validity assessment. Then it asks what to do: fix it, ignore it, discuss it further, or skip it for later.

PR Review Navigator reading over a comment from our code review tool and giving an assessment
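The fetch-and-triage step described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the agent itself (which is a markdown instruction file for Copilot): the query uses field names from GitHub's public GraphQL schema, while `Action`, `ReviewThread`, `unresolved_threads`, and the sample response data are hypothetical names and values made up for the example. No network call is made; the function works on an already-fetched response.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional

# Query for a pull request's review threads. Owner, repository name, and
# PR number are supplied as variables by whoever sends the request.
REVIEW_THREADS_QUERY = """
query($owner: String!, $name: String!, $number: Int!) {
  repository(owner: $owner, name: $name) {
    pullRequest(number: $number) {
      reviewThreads(first: 100) {
        nodes {
          isResolved
          path
          line
          comments(first: 1) { nodes { body author { login } } }
        }
      }
    }
  }
}
"""


class Action(Enum):
    """The four choices offered for each thread (names are illustrative)."""
    FIX = "fix"
    IGNORE = "ignore"
    DISCUSS = "discuss"
    SKIP = "skip"


@dataclass
class ReviewThread:
    path: str
    line: Optional[int]
    author: str
    body: str


def unresolved_threads(response: dict) -> List[ReviewThread]:
    """Keep only unresolved threads, in the order the API returned them."""
    nodes = response["data"]["repository"]["pullRequest"]["reviewThreads"]["nodes"]
    threads = []
    for node in nodes:
        comments = node["comments"]["nodes"]
        if node["isResolved"] or not comments:
            continue
        first = comments[0]
        # The author can be null, for example when the account was deleted.
        author = (first.get("author") or {}).get("login", "unknown")
        threads.append(ReviewThread(node["path"], node.get("line"), author, first["body"]))
    return threads


# Made-up response data, shaped like the query above.
SAMPLE_RESPONSE = {"data": {"repository": {"pullRequest": {"reviewThreads": {"nodes": [
    {"isResolved": True, "path": "README.md", "line": 3,
     "comments": {"nodes": [{"body": "Typo.", "author": {"login": "human-reviewer"}}]}},
    {"isResolved": False, "path": "src/Example.groovy", "line": 42,
     "comments": {"nodes": [{"body": "Tests duplicate helpers.", "author": {"login": "review-bot"}}]}},
]}}}}}

for thread in unresolved_threads(SAMPLE_RESPONSE):
    print(f"{thread.path}:{thread.line} [{thread.author}] {thread.body}")
```

Presenting threads one at a time then amounts to iterating over this list and recording an `Action` for each entry. A PR with more than 100 threads would also need pagination, which the sketch leaves out.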

The technical mechanics are straightforward. What matters is what it feels like to use it: like rubber duck debugging, except the duck talks back.

Rubber duck debugging is the practice of explaining code out loud to a rubber duck on your desk. The act of articulating the problem to something external often helps find the answer. The duck does not respond. It just listens.

The PR Review Navigator is a duck that responds. Our engineers read the comment, Navigator absorbs the full codebase context, and a real discussion begins:

"Is this actually a problem in this specific case, or is this a general rule that doesn't apply here?"

"Show me where else this pattern appears in the codebase."

"What would happen downstream if I made this change?"

That discussion is where the value lives. The fix that comes out of it is almost the easy part.

A case study: when our code review tool was right (and deeper than it looked)

Recently, I was adding telemetry to a Jenkins pipeline library. I wrote the feature, wrote 35 Spock unit tests, ran them, and they all passed. The code looked complete.

Our AI code review tool’s comment pointed at my test file:

"Your unit tests reimplement the parsing and aggregation helpers instead of invoking the functions in the production library. A regression in the production code may go unnoticed because your tests validate the duplicated helper implementations, not the actual code running in pipelines."

A test architecture note. Easy to skim past, easy to dismiss as style feedback.

But PR Review Navigator made it impossible to dismiss. Our AI code review tool had full codebase context, and PR Review Navigator helped decipher what it was actually saying: 35 tests were passing, and every single one of them was calling methods defined inside the test class itself. If the production parsing logic had a bug, none of those tests would catch it. The test suite was providing zero regression protection.

That is not a style issue. That is a correctness problem.

The real fix was to extract all the pure parsing logic into a new class, TrxParser.groovy, with no Jenkins dependencies, fully testable on its own. The production library and the test file both import it. Tests now call the real methods directly. A regression anywhere in the parsing logic will fail a test.
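Why the original tests gave no regression protection is easier to see in a toy version. The sketch below is in Python rather than Groovy and Spock, and everything in it is hypothetical: `production_count_failures` stands in for the real parsing logic (whose code is not shown in this post) and carries a deliberate bug so the difference between the two checks is visible.

```python
def production_count_failures(outcomes):
    """Stand-in for production parsing logic, with a deliberate bug."""
    # Bug on purpose: counts "Passed" where it should count "Failed".
    return sum(1 for outcome in outcomes if outcome == "Passed")


def duplicated_helper_check_passes():
    """Anti-pattern: the check exercises a private copy of the logic."""
    def count_failures(outcomes):  # reimplemented inside the test
        return sum(1 for outcome in outcomes if outcome == "Failed")

    return count_failures(["Passed", "Failed", "Failed"]) == 2


def production_call_check_passes():
    """The check calls the production function, so it sees the real bug."""
    return production_count_failures(["Passed", "Failed", "Failed"]) == 2


print("duplicated helper check passes:", duplicated_helper_check_passes())
print("production call check passes:", production_call_check_passes())
```

The first check stays green no matter what happens to the production function, because it never touches it. Only the second check can fail when the production logic regresses, which is the property the extracted class restores.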

In the same review, our code review tool also caught a cross-platform bug in that new class: using new File(storage).name does not handle Windows-style paths on a Linux JVM, returning the full path string instead of just the filename. The fix the code review tool suggested was implemented verbatim.
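The same class of bug can be reproduced outside the JVM. In the Python sketch below, `posixpath` plays the role of a Linux runtime that only recognizes forward slashes, and `ntpath` is one way to accept both separators regardless of host. The function names and example paths are made up, and this is not the fix that was applied in the PR, which is not shown here.

```python
import ntpath
import posixpath


def naive_file_name(storage: str) -> str:
    """Separator handling as on a POSIX host: only '/' splits the path."""
    return posixpath.basename(storage)


def portable_file_name(storage: str) -> str:
    """Split on both forward slashes and backslashes, whatever the host OS."""
    return ntpath.basename(storage)


windows_style = "C:\\agent\\work\\results.trx"
posix_style = "/var/agent/work/results.trx"

for path in (windows_style, posix_style):
    print(repr(naive_file_name(path)), repr(portable_file_name(path)))
```

One trade-off to keep in mind: a POSIX file name that legitimately contains a backslash would be cut short by the portable version, so this approach assumes such names do not occur in the inputs.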

Both of these started as what looked like minor code comments. The discussion initiated by PR Review Navigator revealed what they actually were.

When the AI gets it wrong

The same habit protects you in the opposite direction.

Our code review tool flagged a line in a Jenkins pipeline script, arguing that using the % operator on buildNum would invoke Groovy's string formatting behavior rather than arithmetic modulo, since buildNum is a String from the Jenkins environment. This is a genuine Groovy quirk, and the comment sounded completely credible.

But reading just the flagged line in isolation was not enough. When the PR Review Navigator looked at the full method, it found that the code was already calling .toInteger() before applying the % operator. The arithmetic was correct. The code review tool had either reviewed an earlier draft or missed the type conversion call a few characters to the left.

Without the discussion, I would have applied a fix to code that did not need fixing. The Navigator caught it because we looked at the whole method together, not just the snippet. Outcome: ignore. Move on.
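The general rule behind this case, that a string-typed build number must be converted before any arithmetic, can be illustrated outside Groovy. The sketch below is Python, where % applied to a str is string formatting rather than modulo. It does not reproduce the Jenkins script, and the function names and values are invented for the example.

```python
def build_bucket(build_num: str, buckets: int) -> int:
    """Convert first, then take the remainder: the safe order."""
    return int(build_num) % buckets


def unconverted_bucket(build_num: str, buckets: int):
    """Same expression without the conversion.

    In Python, str % value means string formatting, so for a plain digit
    string with no format specifiers this raises TypeError.
    """
    return build_num % buckets


print(build_bucket("42", 5))

try:
    unconverted_bucket("42", 5)
except TypeError as error:
    print("unconverted call failed:", error)
```

Whether a flagged line is the first function or the second depends entirely on code that may sit outside the highlighted snippet, which is why reading the whole method settled the question.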

The cognitive shift

When an AI handles the mechanical act of writing a fix, an engineer’s brain is freed up for the work that only they can do: questioning the design, evaluating tradeoffs, deciding whether a comment is pointing at the real problem or just a symptom of it.

The PR Review Navigator does not make me faster at closing comments. It cuts the time I spend on the mechanical parts of fixing code, so I can spend more of it on design and architecture. Those are two very different things.

Speed-running through a review queue feels productive. But when the feedback is coming from a system that sometimes has deeper insight than it appears – and sometimes has less context than it claims – a thinking partner is needed, not a faster way to click "resolve."

What we learned

A few things became clear after using this approach across enough PRs:

Not all comments deserve equal scrutiny. Our AI code review tool’s comments about syntax and well-known anti-patterns are usually right and fast to apply. Comments that involve broader reasoning about code structure or logic are worth a longer discussion.

The discussion format changes how you think about the codebase. Explaining a comment out loud, even to an AI, surfaces subconscious assumptions.

There are real limitations. PR Review Navigator is only as good as the codebase context the AI can load. Very large monorepos require more care. Session state does not persist between Copilot conversations, so picking up where you left off takes a moment to re-establish.

But the core shift, from processing feedback to discussing it, is something I would not go back from. Give it a try with your next PR, even without a custom tool. Pick one comment. Read it alongside the full method. Ask yourself what you would say to a colleague about it.

The duck might surprise you.

The techniques described in this post reflect the author’s personal workflow and do not represent an official Docusign engineering standard.

Steffen is a Principal Software Engineer at Docusign, where he works on the company’s build and CI/CD systems. He is fascinated by how AI tooling can help engineers become more efficient and frequently experiments with and evangelizes practical AI techniques for developers. His work focuses on making software delivery safer and more reliable at scale, from deployment pipelines to the developer experience around them. At Docusign he also helps teams adopt AI-assisted workflows in ways that are measurable, trustworthy, and grounded in real engineering problems.
